- Description:

Stanford Online Products Dataset

Additional Documentation: Explore on Papers With Code

Source code: tfds.datasets.stanford_online_products.Builder

Versions:

1.0.0 (default): No release notes.

Download size: 2.87 GiB

Dataset size: 2.89 GiB

Auto-cached (documentation): No

Splits:

| Split | Examples |
|---|---|
| 'test' | 60,502 |
| 'train' | 59,551 |
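The split names in the table are the values one would pass when requesting data. The following is a minimal sketch, assuming the `tensorflow_datasets` package is installed and that the dataset is registered under the name `stanford_online_products` (inferred from the builder path above). `SPLIT_SIZES`, `load_splits`, and `total_examples` are hypothetical names introduced for illustration. The import is deferred so that the size constants can be used without triggering the download.

```python
# Split sizes copied from the table above.
SPLIT_SIZES = {"test": 60_502, "train": 59_551}


def load_splits(data_dir=None):
    """Load both splits via TFDS (downloads about 2.87 GiB on first use)."""
    # Imported lazily so the constants above work without TFDS installed.
    import tensorflow_datasets as tfds

    train_ds, test_ds = tfds.load(
        "stanford_online_products",  # assumed registered dataset name
        split=["train", "test"],
        data_dir=data_dir,
    )
    return train_ds, test_ds


def total_examples():
    """Total number of examples across both splits."""
    return sum(SPLIT_SIZES.values())
```

Note that the test split is slightly larger than the train split, so the two halves are close to an even division of the data.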

- Feature structure:

    FeaturesDict({
        'class_id': ClassLabel(shape=(), dtype=int64, num_classes=22634),
        'image': Image(shape=(None, None, 3), dtype=uint8),
        'super_class_id': ClassLabel(shape=(), dtype=int64, num_classes=12),
        'super_class_id/num': ClassLabel(shape=(), dtype=int64, num_classes=12),
    })
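As a sanity check on the structure above, the sketch below validates a single example that has already been converted to NumPy-style values (for instance via `tfds.as_numpy`). It is an illustration under stated assumptions, not part of TFDS: `check_example` and the two constants are hypothetical names, and the image is inspected only through its `shape` and `dtype` attributes so the check itself needs nothing beyond the standard library.

```python
NUM_CLASSES = 22634      # 'class_id'
NUM_SUPER_CLASSES = 12   # 'super_class_id' and 'super_class_id/num'


def check_example(example):
    """Return True if an example matches the documented feature structure."""
    image = example["image"]
    # Image(shape=(None, None, 3), dtype=uint8): any height and width, 3 channels.
    if len(image.shape) != 3 or image.shape[-1] != 3:
        return False
    if str(image.dtype) != "uint8":
        return False
    # ClassLabel values are integer ids in [0, num_classes).
    if not 0 <= int(example["class_id"]) < NUM_CLASSES:
        return False
    for key in ("super_class_id", "super_class_id/num"):
        if not 0 <= int(example[key]) < NUM_SUPER_CLASSES:
            return False
    return True
```

The image height and width are left unconstrained here because the feature structure declares them as `None`, which means images may differ in size and would typically need resizing before batching.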

- Feature documentation:

| Feature | Class | Shape | Dtype | Description |
|---|---|---|---|---|
| | FeaturesDict | | | |
| class_id | ClassLabel | | int64 | |
| image | Image | (None, None, 3) | uint8 | |
| super_class_id | ClassLabel | | int64 | |
| super_class_id/num | ClassLabel | | int64 | |

Supervised keys (See

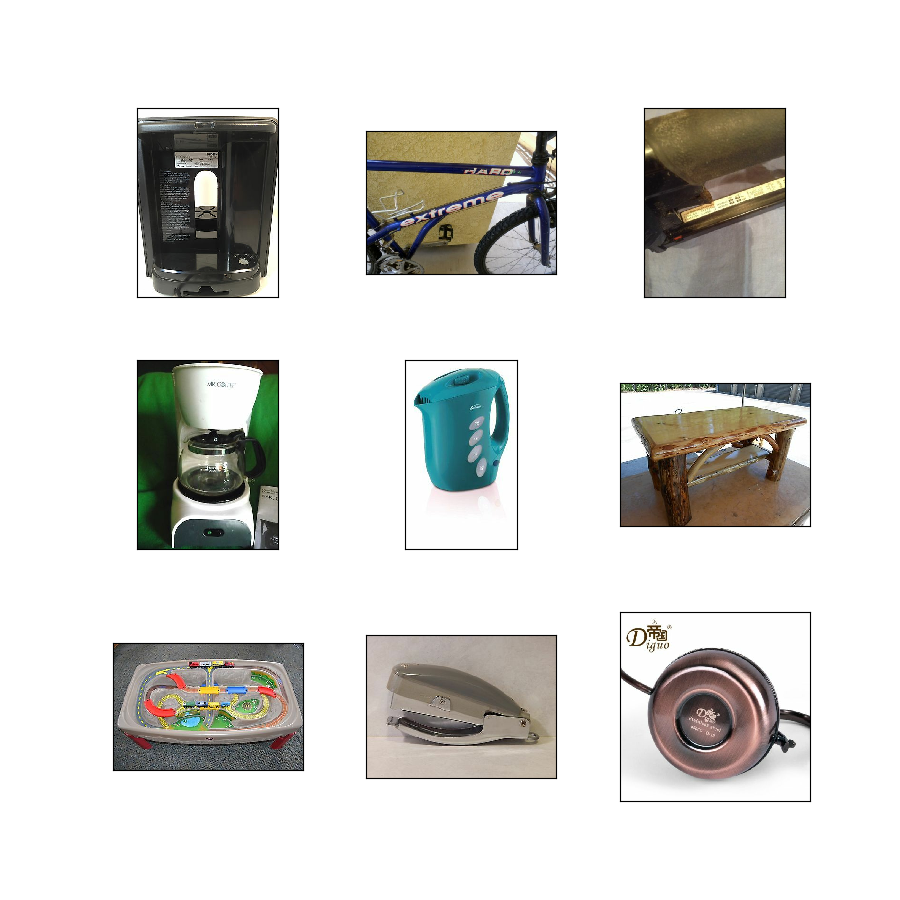

as_superviseddoc):NoneFigure (tfds.show_examples):
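Because the supervised keys are listed as None, requesting `as_supervised=True` is not expected to yield (input, label) pairs for this dataset, and the pairing has to be done by hand. A minimal sketch follows; `to_supervised` is a hypothetical helper, and the choice of `class_id` as the default label is an assumption for illustration.

```python
def to_supervised(example, label_key="class_id"):
    """Map a feature dict to an (image, label) pair."""
    return example["image"], example[label_key]
```

With a `tf.data.Dataset` of feature dicts, this could be applied as `ds.map(to_supervised)`. To train on the coarse labels instead, pass `label_key="super_class_id"`, for example through `functools.partial`.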

- Examples (tfds.as_dataframe):

- Citation:

@inproceedings{song2016deep,
  author = {Song, Hyun Oh and Xiang, Yu and Jegelka, Stefanie and Savarese, Silvio},
  title = {Deep Metric Learning via Lifted Structured Feature Embedding},
  booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2016}
}